The bottleneck isn't building.

It's knowing what works.

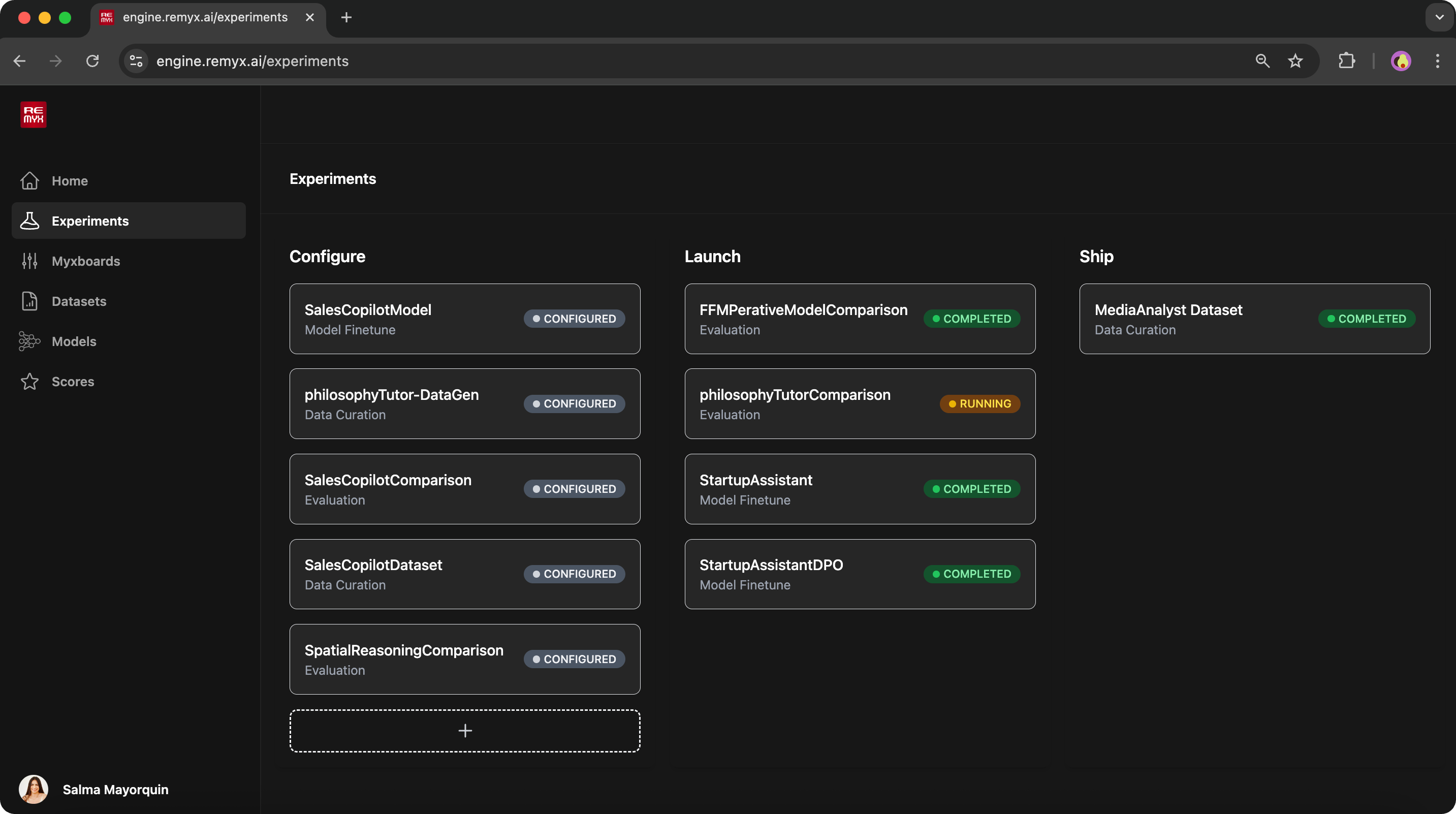

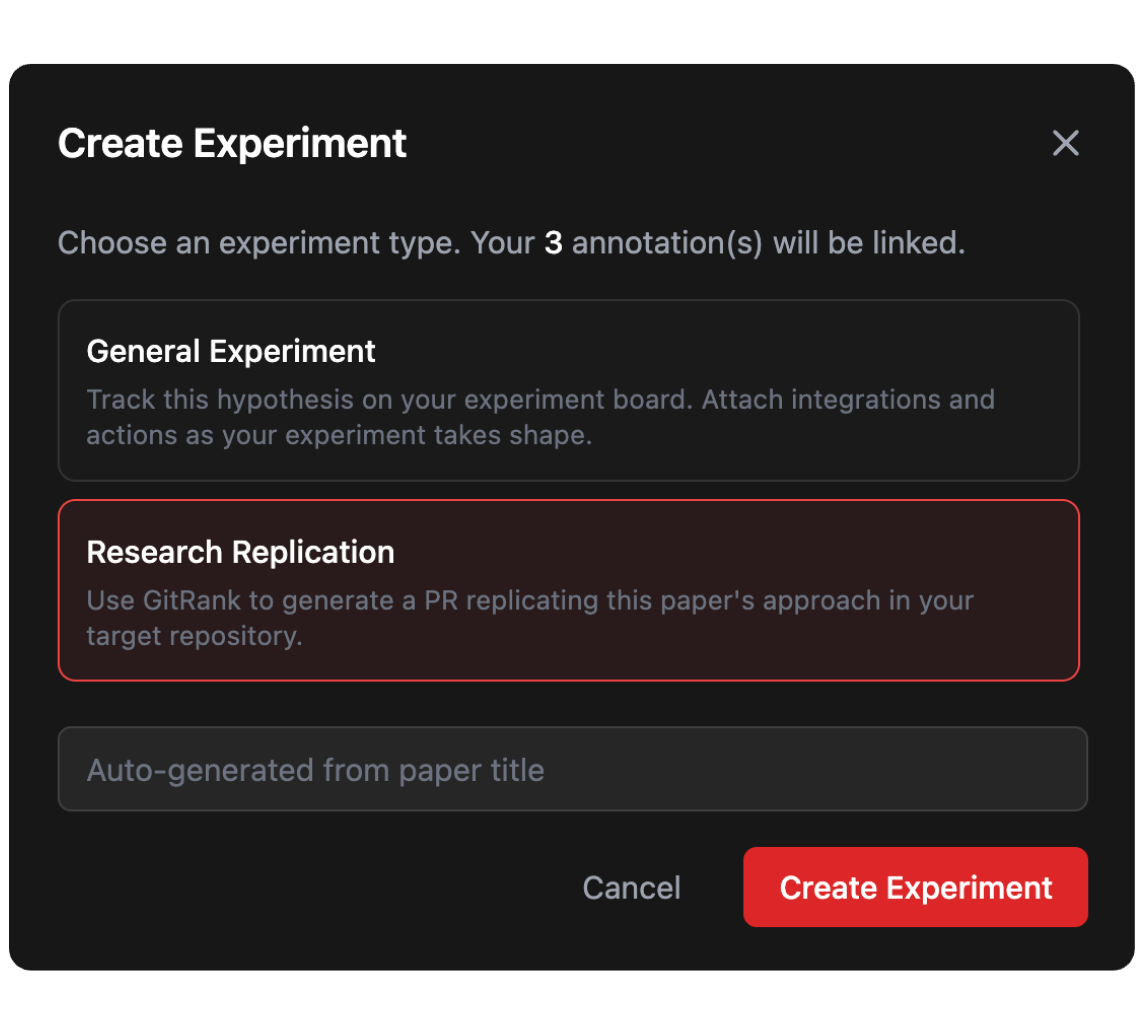

Remyx gives your team a systematic way to discover what's relevant, test what's promising, and validate what improves production.

Idea to Production, Systematically

Experiment with confidence.

Integrate discovery, building, and validation.

Discover

Generate environments from relevant ideas.

Build

Turn ideas into testable changes.

Validate

Stop shipping on benchmarks alone. Test against actual use.

Learn what works.

Remyx helps engineers test more ideas and helps leads know which ones drive improvements.

For ML Engineers

Keeping up with AI advances is a full-time job. Most publications don't ship production-ready code. Adapting an idea to your codebase takes days before you know if it's worth it.

For Team Leads

Your team is testing ideas, but without a way to validate them scientifically, you can't tell what's working, what's been tried, or what to prioritize next.

Built by practitioners, for teams building at the frontier

A team of mathematicians and award-winning ML innovators with a decade of experience applying AI in robotics, healthcare, content recommendation, and enterprise data/ML infrastructure.

Applied Mathematics, UC Berkeley. Former Solutions Architect at Databricks, advising on MLOps strategy for companies from startups to the Fortune 500. Award-winning ML innovator recognized by NVIDIA's developer community.

UC Berkeley. 10+ years applying ML in healthcare, robotics, and content recommendation at Riot Games, Tubi, and Robust.AI. Open-source tools cited by Google DeepMind and used in peer-reviewed research.

Talks, Pods & Writing

Conference talks, podcast conversations, and field notes on how AI teams go from experiment to production.

Active in the Research Community

Open Source

We contribute open-source tools, datasets, and benchmarks across AI domains, and the research community builds on them.

Follow us on Substack

Technical deep-dives, experiment logs, and lessons learned from the founders of Remyx AI.

Ready to stop guessing?

Start exploring relevant research and run your first experiment in minutes.